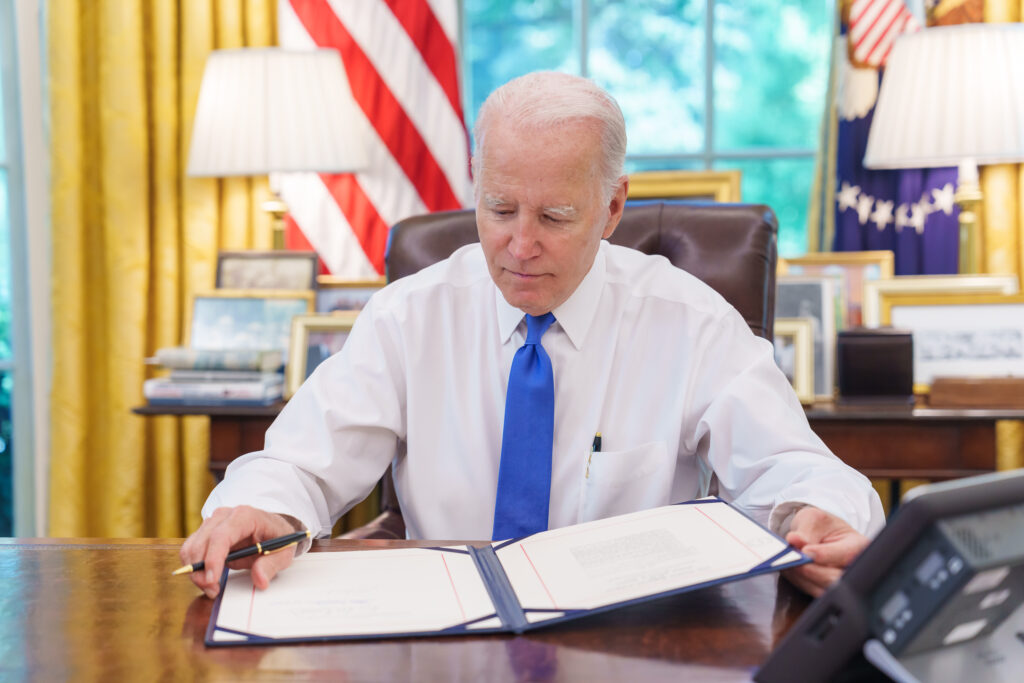

Recent weeks have witnessed a cascade of pivotal events for artificial intelligence (AI) governance. Over the last few days, US President Joe Biden signed an Executive Order on regulating and funding AI, the G7 published an AI code of conduct, and the UK held the first-ever AI Safety Summit. In 2022 alone, 37 AI-related laws were passed across 127 countries, highlighting how urgent and fast-moving AI regulation has become. Despite debates between major technology companies and EU member states, the EU’s much-anticipated AI Act is also now nearing agreement, carrying with it major implications for the bloc. Now is therefore a useful moment to review the most recent global developments in AI regulation.

Key players and key topics today

The new regulatory dynamics revolve around two key features of AI policy: safety and trustworthiness. The UK AI Safety Summit, the US Executive Order, and the G7 Code of Conduct have crystallised key themes important in discussions on AI regulation. These span from the intricate challenges posed by cutting-edge AI models to the pragmatic steps governments are taking to ensure safety, security, and international collaboration.

At the heart of the UK AI Safety Summit were discussions concerning the opportunities and risks posed by frontier AI models: complex systems that could gain autonomy and challenge human control. Looking beyond application-specific concerns, the summit underscored the need to address risks emerging during the training and development phases. It emphasised the responsibility of developers to act transparently and accountably, and the imperative to mitigate risks associated with cutting-edge AI technologies. The event culminated in the Bletchley Declaration, signed by 28 countries and signalling a commitment to ongoing global cooperation around AI risks, in the establishment of the AI Safety Institute, and in unanimous acknowledgment of the need for continued research to ensure the future safety of AI. Importantly, despite increased tensions between China and the US regarding AI, both nations signed the Declaration.

In many respects, China is blazing a trail when it comes to AI regulation: it introduced its latest AI law in August 2023, creating concrete requirements for how algorithms and AI systems are built and deployed domestically. While its approach shares features with policies pursued by other states, notably safety and transparency through control, China has added further requirements, such as ensuring that content does not violate censorship controls and that deepfakes produced by generative AI are labelled.

Meanwhile, the US Executive Order took a focused approach to AI safety and security. With an eye on critical infrastructure, the order emphasised the development of standards to fortify the nation’s vital systems against potential AI-related threats. Introducing the practice of “red-teaming,” the order mandates stress-testing of foundation models with significant national security implications and encourages transparency in the evaluation process. Notably, the Executive Order aims to propel technological advancements in identifying, authenticating, and tracing content produced by AI systems. At the same time, it champions innovation and competition in the AI and related technology sectors.

The G7 Code of Conduct emerges as a pivotal international initiative, providing a voluntary framework aimed at organisations across academia, civil society, the private sector, and the public sector. Applicable at all stages of the AI lifecycle, the code seeks to cover design, development, deployment, and use of advanced AI systems. Crucially, it complements the EU AI Act’s legally binding rules, signalling a collaborative effort to harmonise global standards. With a focus on offering detailed and practical guidance, the G7 Code of Conduct strives to shape responsible AI practices across diverse sectors, fostering a unified approach to the ethical development and deployment of artificial intelligence.

Proposed in April 2021, the EU AI Act regulates the sale and use of AI in the EU. It ensures consistent standards across member states to protect citizens’ health, safety, and rights. While not addressing data protection or content moderation, the Act complements existing laws and follows a risk-based approach. It applies to global vendors serving EU users and thus extends its reach beyond developers and deployers within the EU. This expansive scope reflects the Act’s ambition to regulate AI activities on a global scale.

AI policy at the intersection of policy areas

AI policy extends its threads into diverse policy domains, intertwining with matters of security, safety, and societal well-being. As AI technologies grow, so do the multifaceted challenges that span a spectrum of severity and urgency. From the pressing issues of disinformation and public service bias to the more ominous threats of advanced cyber-attacks and the potential misuse of AI for terrorist purposes, the value of AI policy for cybersecurity becomes evident.

Striking a delicate balance between technological innovation and safeguarding human rights is a paramount challenge, as the quest for AI that respects human rights and upholds democratic principles remains a work in progress. AI has already unlocked potential in education, healthcare, and the criminal justice system. For example, AI-powered tools can help doctors assess a patient and suggest diagnoses, as well as support the patient in their daily life. However, this comes with a risk of patient harm and of gaps in accountability and transparency. Altogether, the absence of robust regulations risks giving rise to socioeconomic disparities and ethical quandaries.

AI and the future of work

UK Prime Minister Sunak and businessman Elon Musk discussed the impact of AI on the future of work in one panel at the UK AI Safety Summit. AI is expected to render some jobs obsolete while creating new roles and reshaping existing ones, signalling a dynamic and transformative future of work. Meanwhile, the US Executive Order contains provisions on the impact of AI on the labour market and workers’ rights, and includes principles and best practices for workers.

The risk of job automation exists for repetitive and structured tasks in predictable environments where clear input-output relationships exist and outcomes are easily visible and quantifiable. While AI excels in replicating routine tasks, persuasive capacity and originality remain challenging for AI to emulate. It was acknowledged that AI is poised not to replicate but to substitute certain aspects of human intelligence. It is plausible to expect AI to become a commodity – increasingly accessible and cost-effective – thus fundamentally reshaping certain industries.

Implications for Governments

- Relations with technology companies: Through these policies and initiatives, governments are deepening collaboration with the private sector. Until now, safety testing of new AI models has been conducted primarily by the companies developing them. By introducing external scrutiny, governments take charge of a more transparent and trustworthy evaluation process.

- Regulations or guidance: The proliferation of binding policies and voluntary codes puts pressure on governments to decide whether to move beyond mere guidance and work towards a cross-cutting AI law. Should many governments pursue an enforceable framework, they can ensure a more effective and harmonised approach to addressing safety and trustworthiness in AI development and deployment, and work towards an adequate and binding international agreement on AI ethics.

- Public awareness and trust: Governments could invest in campaigns to educate citizens about the benefits, risks, and ethical considerations of AI. This could involve partnerships with educational institutions and industry experts.

- Agility and evaluation: Given the rapid evolution of AI technologies, governments need to adopt flexible policies that can adapt to new developments. Continuous evaluation and updates to AI policies will be essential to address emerging challenges and opportunities.

Implications for Private Firms

- Compliance: Firms are called upon to proactively adopt and adhere to new regulation and to contribute to safety and transparency as part of a harmonised global approach. Enhancing the security of their AI technologies will require significant adjustments, which may include strengthening ethics and compliance procedures within the firm.

- Employee development: AI policies may require businesses to adapt their workforce and invest in employee training for AI-related skills. This includes upskilling or reskilling employees to work alongside AI technologies and addressing potential job displacement through thoughtful workforce planning.

- Investment: Investors are becoming more aware of the regulatory environment surrounding AI. Firms that can demonstrate a proactive approach to compliance may attract more investor interest. On the other hand, non-compliance could lead to legal challenges, fines, and damage to a company’s reputation.

- Government relations: Public-private partnerships can contribute to AI safety and security. Firms should be prepared to engage in these collaborative efforts, contributing expertise to shape, for example, standards or policy updates.

In sum, governments are likely to deepen collaboration with tech companies to ensure transparent AI testing. The proliferation of policies pressures governments to consider enforcing cross-cutting AI laws for a harmonised approach. Public awareness campaigns are a strategy to educate citizens about AI, while agile and continuously updated policies are crucial. For private firms, proactive compliance, employee development, and engagement in public-private partnerships are emphasised to navigate the evolving AI landscape.